It’s been a busy 8 weeks at Equinix in Melbourne, and the servers are now finally online and running customer applications.

Changelog

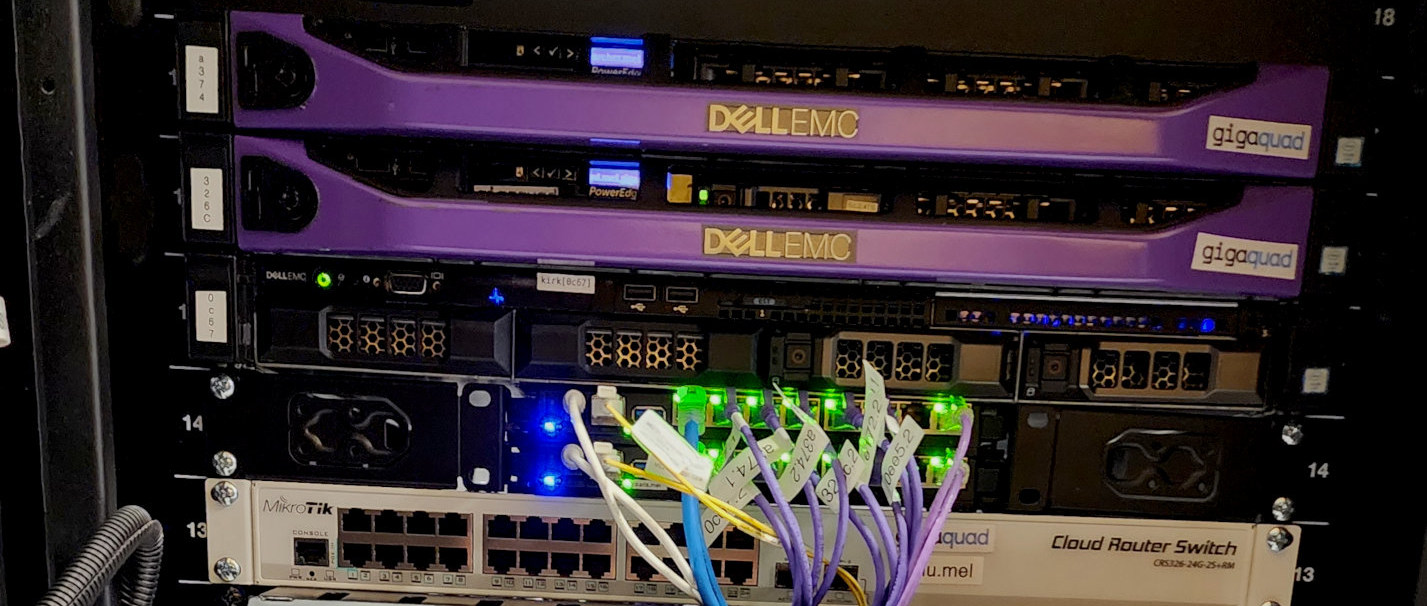

Equinix ME2 colo deployment

The deployment of equipment in the colocation space at Equinix ME2 in Melbourne continued over the past two months. Racking the equipment was very quick; however, we ran into difficulties with our networking equipment and topology immediately afterwards. These took 6 weeks to resolve, and the deployment is now 100% online, using the network topology shown in the diagram.

The difficulties were caused by an inappropriate choice of hardware, which turned out to be incompatible with the routing topologies offered by the upstream provider. Where the Mikrotik RB5009s sit in the diagram above, there were initially CRS326 switches. These switches have only a single CPU core, so they were unsuitable for any Layer 3 routing, and they also appeared to suffer from buffer exhaustion, and consequent packet loss, when using a 10G fiber transceiver. The plan was therefore to replace the CRS326s with two Mikrotik CCR2004 routers, which have the CPU capacity for more Layer 3 work. However, no supplier had these in stock, and given the time pressure the RB5009s were chosen as an interim solution. They are performing admirably, and the constraints they impose should benefit the topology in the long term by making it more generic and not dependent on proprietary protocols like MLAG.

Migration of customer applications has now begun.

Observations/specifics:

- A custom script has been added to the VRRP setup to improve its awareness of Layer 3 failures.

- Linux bonding mode 1 (active-backup) works really well, since Mikrotik RouterOS is Linux under the hood. It’s also very easy and transparent to set up with netplan on the Ubuntu hosts. It’s interesting because it simplifies the topology compared to MLAG, and the bonds continue to operate at Layer 2 when they reach the router/switch. This keeps the client access segment less CPU-intensive and makes for a more generic and sustainable topology in the long term. Sure, since it’s an active-backup model, the links are not aggregated and you don’t get 2x the speed over the link. However, the primary purpose of the bond is redundancy, and it provides that while remaining generic and transparent.

- Using both ARP and MII monitoring on the host side of the bonds ensures that the host will switch to the alternate router in the event of a Layer 3 network failure. This works great in concert with the custom VRRP setup.

- Using BGP on the uplinks to the colo provider has worked well, with one exception: by design, there are no routing preferences on the colo provider’s side, which means that both routers receive traffic destined for the servers. This is not an issue for connectivity, and routes will fail over if a router fails; however, it does rule out using a stateful firewall on the BGP-facing routers, because return traffic can arrive at a router that never saw the outgoing flow. There is a plan to mitigate this with some custom scripts that divert traffic to the primary router under normal conditions.
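The custom VRRP script itself isn’t shown here. As a rough illustration of the general idea only, the Python sketch below models a common “tracking” pattern: each router lowers its effective VRRP priority when a Layer 3 check fails (for example, a hypothetical check that its BGP peer is reachable), so the other router takes over as master. The `Router` class, the check names, the addresses and the penalty value are all hypothetical; the election rule (highest priority wins, ties go to the higher primary IP address) follows RFC 5798. It is a model of the logic, not RouterOS scripting.

```python
from dataclasses import dataclass, field
from ipaddress import IPv4Address

# Hypothetical penalty subtracted from the VRRP priority for each failed
# Layer 3 check. It must exceed the priority gap between the routers for a
# single failure to guarantee failover.
PENALTY_PER_FAILED_CHECK = 60


@dataclass
class Router:
    name: str
    address: IPv4Address  # primary address on the shared VRRP segment
    base_priority: int    # configured priority, 1-254 for a non-owner router
    # Hypothetical Layer 3 check results, e.g. {"bgp-peer": True}
    checks: dict[str, bool] = field(default_factory=dict)


def effective_priority(router: Router) -> int:
    """Configured priority minus a penalty per failed Layer 3 check."""
    failed = sum(1 for ok in router.checks.values() if not ok)
    # Priority 0 has a special meaning in VRRP (the master is resigning),
    # so never drop below 1.
    return max(1, router.base_priority - PENALTY_PER_FAILED_CHECK * failed)


def elect_master(routers: list[Router]) -> Router:
    """RFC 5798 rule: highest priority wins; ties go to the higher primary IP."""
    return max(routers, key=lambda r: (effective_priority(r), r.address))


if __name__ == "__main__":
    a = Router("rb5009-a", IPv4Address("10.0.0.2"), 150, {"bgp-peer": True})
    b = Router("rb5009-b", IPv4Address("10.0.0.3"), 100, {"bgp-peer": True})
    print(elect_master([a, b]).name)  # rb5009-a
    a.checks["bgp-peer"] = False      # router A loses its upstream
    print(elect_master([a, b]).name)  # rb5009-b
```

Subtracting a fixed penalty per failed check, rather than dropping straight to the minimum priority, lets several independent checks be weighed against each other; either choice is a design decision rather than a VRRP requirement.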

Hetzner Falkenstein dedicated maintenance

One of the dedicated servers in Germany required a motherboard replacement, which led to some downtime as well as some peculiarities when reconfiguring the network, due to a change in the names and number of NICs.
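When a hardware swap renames NICs, one possible mitigation is to pin the names netplan uses to MAC addresses with its `match: macaddress` and `set-name` keys, so the rest of the configuration keeps referring to stable names. The sketch below is a hypothetical helper, not what was done on this server: it lists physical interfaces and their MACs from `/sys/class/net` and builds a netplan `ethernets` section. The stable names `nic0`/`nic1` and the MAC addresses in the example are made up, and the result is printed as JSON only to keep the sketch free of third-party dependencies. Onboard NICs on a replacement board come with new MAC addresses, so the `match` entries would still need updating after such a swap.

```python
import json
from pathlib import Path


def nic_inventory(root: Path = Path("/sys/class/net")) -> dict[str, str]:
    """Map each physical interface name to its MAC address.

    Physical NICs expose a 'device' link under sysfs; virtual interfaces
    (lo, bonds, bridges) do not, so they are skipped.
    """
    if not root.is_dir():
        return {}
    return {
        iface.name: (iface / "address").read_text().strip()
        for iface in sorted(root.iterdir())
        if (iface / "device").exists()
    }


def netplan_pins(inventory: dict[str, str], stable_names: dict[str, str]) -> dict:
    """Build a netplan 'ethernets' section binding each MAC to a stable name.

    stable_names maps the current kernel name -> the name the rest of the
    netplan config refers to (hypothetical names such as 'nic0').
    """
    ethernets = {
        stable: {"match": {"macaddress": inventory[kernel]}, "set-name": stable}
        for kernel, stable in stable_names.items()
    }
    return {"network": {"version": 2, "ethernets": ethernets}}


if __name__ == "__main__":
    # Hypothetical inventory; on a real host use nic_inventory() instead.
    example = {"enp65s0f0": "aa:bb:cc:00:00:01", "enp65s0f1": "aa:bb:cc:00:00:02"}
    pins = netplan_pins(example, {"enp65s0f0": "nic0", "enp65s0f1": "nic1"})
    print(json.dumps(pins, indent=2))
```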

Originally posted at https://www.dfoley.ie/blog/building-gigaquad-changelog-mar-apr-25